Mo Data, Mo Problems

Why Data Abundance Produces Decision Failure

Data Alone Is Not Enough

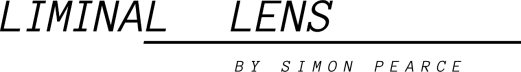

Global society is currently experiencing an explosion in data and data-analysis capacity, yet simultaneously facing a moral panic that people are becoming less intelligent, less able to process information into coherent thought and effective actions.

This is a case of epistemic overload at the civilizational level. By this, I mean that the mental models that people use to process all the information being thrown at them simply can’t keep up. People don’t know what’s important and what should be ignored, or how to interpret all of the conflicting signals into a clear picture for themselves.

To make matters worse, a torrent of information is coming from many global actors with different objectives: businesses, governments, NGOs, and political parties are all trying to use spectacle to hijack people's attention and get their dollars, votes, and loyalty. Some of it is signal; a lot of it is noise. People often lack the frameworks to tell the difference. A lot of people are descending into distrust, conspiracies, and paranoid thinking as a result. Others are simply checked out into pure hedonism. Some oscillate between hedonism and rage: a common pattern in a confusing era.

In the modern digital media economy, people are being manipulated by power brokers of various kinds, not treated as human beings deserving dignity and respect. This tendency to instrumentalize the population has always existed; what’s different now is the unprecedented access that a variety of businesses, governments, and even military organizations have to the minds of ordinary civilians. For example, the CCP and Russia have more ways to communicate rapidly with Americans and Europeans today than their own governments did 100 years ago.

Institutions Have This Problem, Too

Institutions gather more and more data, but their models periodically fail to detect the implications of shifts in the underlying data over time. This leaves them exposed to what Nassim Nicholas Taleb calls fat-tailed risk. Fat-tailed events don’t happen very often, but when they do, they can be extremely disruptive or outright catastrophic, and models built on thin-tailed assumptions underestimate how likely they are.
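To make the fat-tail point concrete, here is a minimal sketch under stated assumptions. It compares the probability of a five-standard-deviation move under a thin-tailed normal model with the same move under a Student's t distribution with three degrees of freedom, rescaled to unit variance. The choice of distribution, the threshold, and the function names are illustrative assumptions, not anything taken from Taleb's work or from a real institutional risk model.

```python
import math


def normal_tail(k: float) -> float:
    """P(X > k) for a standard normal variable (thin tails)."""
    return 0.5 * math.erfc(k / math.sqrt(2.0))


def student_t3_tail(k: float) -> float:
    """P(X > k) for a Student's t variable with 3 degrees of freedom,
    rescaled to unit variance (fat tails). Uses the closed-form CDF."""
    return 0.5 - (k / (1.0 + k * k) + math.atan(k)) / math.pi


if __name__ == "__main__":
    k = 5.0  # hypothetical "five sigma" shock
    thin, fat = normal_tail(k), student_t3_tail(k)
    print(f"thin-tailed model: {thin:.2e}")
    print(f"fat-tailed model:  {fat:.2e}")
    print(f"ratio: {fat / thin:,.0f}x")
```

Under these assumptions, the fat-tailed model rates the five-sigma move as thousands of times more likely than the normal model does, even though both distributions have the same variance. A model that presumes thin tails is not slightly wrong about extremes; it is wrong by orders of magnitude.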

Unmodeled external risks are unavoidable because all models are closed systems defined by underlying assumptions. These assumptions function as axioms: they set the boundaries of what the model can perceive and process. When reality violates those axioms, the model cannot reliably detect the breach, since it presupposes their stability. Many such axioms are implicit rather than formally stated, which makes their failure harder to see. To restore alignment with reality, someone with decision authority must intervene to update the model’s assumptions or construct a new model with revised premises, data inputs, and analytical frameworks.
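One way to picture this is to write a model's assumptions down as explicit, checkable statements that sit outside the model itself. The sketch below is hypothetical: the `Assumption` type, the two example assumptions, and their thresholds are invented for illustration and do not describe any real risk system.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Assumption:
    """An axiom the model silently relies on, made explicit and testable."""
    name: str
    holds: Callable[[Sequence[float]], bool]


def violated(assumptions: Sequence[Assumption],
             observations: Sequence[float]) -> List[str]:
    """Return the names of assumptions that the observations contradict."""
    return [a.name for a in assumptions if not a.holds(observations)]


# Hypothetical axioms for a model of daily returns (thresholds are made up).
ASSUMPTIONS = [
    Assumption("daily moves stay within 5%",
               lambda xs: max(abs(x) for x in xs) <= 0.05),
    Assumption("average move is near zero",
               lambda xs: abs(mean(xs)) <= 0.01),
]

calm = [0.01, -0.02, 0.005, -0.01, 0.015]
stressed = [0.01, -0.09, -0.12, 0.02, -0.07]

if __name__ == "__main__":
    print("calm:", violated(ASSUMPTIONS, calm))
    print("stressed:", violated(ASSUMPTIONS, stressed))
```

The check only reports that an axiom has broken. It does not repair the model; as the paragraph above argues, that still requires someone with decision authority to revise the premises. It also covers only the assumptions someone thought to write down, which is exactly why implicit axioms are the dangerous ones.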

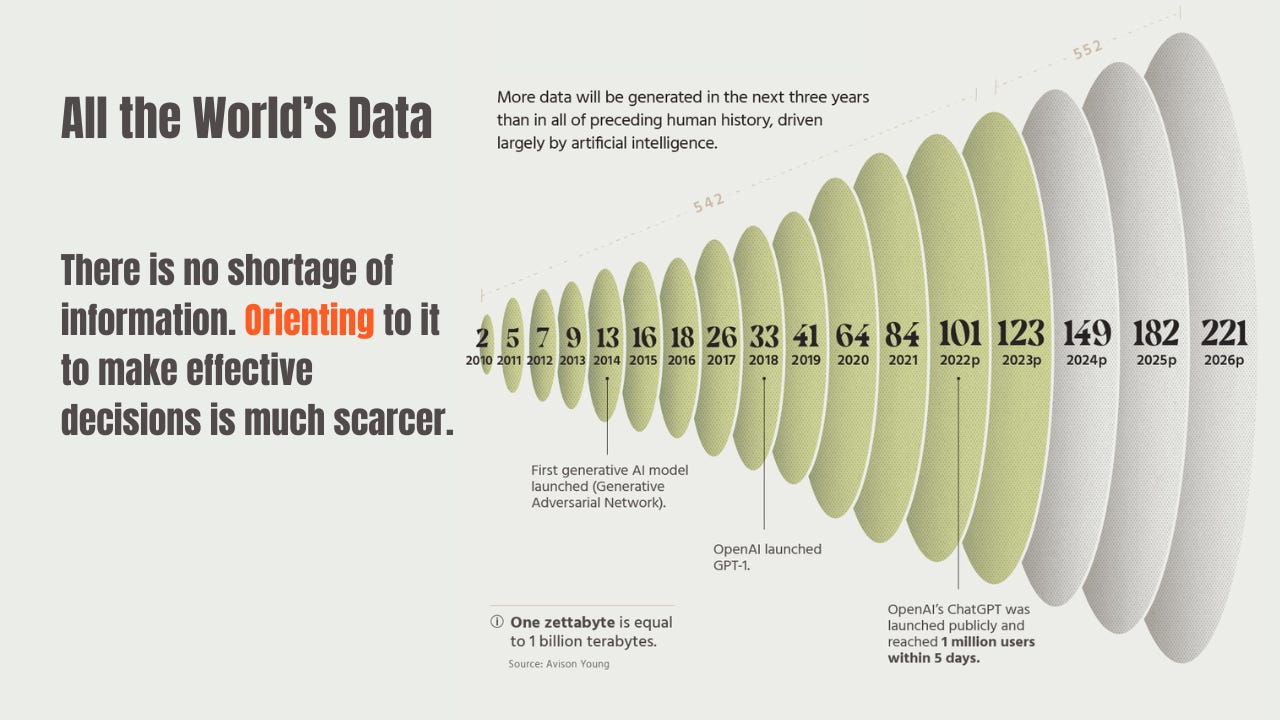

The Deeper Problem

What unites these failures—at the individual and institutional level—is that they are rarely failures of information access. They are failures of orientation. Modern systems accumulate data faster than they can revise the models used to interpret it, leaving decision-makers unable to coordinate effective action even as informational capacity grows.

As I noted in Strategic Blindness, this is not a new problem; it has reappeared throughout history.

The Roots of Disorientation

Models (both quantitative and broader worldview models) are not updated because those responsible for building and maintaining them are often rewarded for not updating them and punished for attempting institutionally disruptive updates, even when those updates are essentially correct, grounded in reality, or even vital for institutional survival.

The “shoot the messenger” problem is why Stanley Tucci’s character, Eric Dale, was fired in the opening scene of the movie Margin Call. As we later learn in the movie, Dale was trying to update the bank’s risk model to prevent catastrophe, but the bank punished him for even trying.

In the movie, the look of bafflement on Eric Dale’s face says it all: he’s struggling to understand why the institution that he’s trying to save is firing him for trying to save it. He was so busy modeling the relationships between the bank and the markets that he forgot to model his own relationship with the bank; more specifically, with particular senior leaders within the bank who saw his work as a threat to their positions.

If the bank had accepted Dale’s model, it would have been walking away from an extremely profitable source of income that it had been relying on for four years or more. In other words, accepting the reality of Dale’s model would have had very high costs for many senior people; they therefore decided to fire him before he could finish the work. At the individual level, their behavior (firing him) might have actually been rational.

How Does This Happen?

Under conditions of intense intra-elite rivalry, ambitious people make use of certain worldviews and models in order to secure promotion and status. When those worldviews and/or models start to break down, the people relying on them tend to reject the new data or interpretations because updating those models would lead to a personal loss of power and status. When groups form around these worldviews, refusal to update the model is seen as a heroic defense of group interests, rather than what it really is: a refusal to accept a shift in the underlying reality.

The “pre-rupture phase” can last for a very long time: years, even decades. The map no longer matches the territory, but everybody is still getting rewarded as if it does. Model failure risk is often diffuse and hard to pinpoint because many models rely on other models in a highly complex web. This is a thorny topic I will return to in a future essay, where I intend to discuss the hundreds of billions of dollars of waste, incompetence, and fraud embedded in the global markets for digital advertising and digital media.

There are many mechanisms that can be brought to bear to conceal the truth and keep institutions stable. In a certain sense, the entire long-term debt cycle is one long epistemic drift that lasts for anywhere from 80 to 130 years. Over such a long time horizon, when, exactly, is it rational to stand up and say “Enough, we can’t go on like this!”? For most insiders, the likely answer is “never.” Even if the whole system looks like it’s about to fall, it’s hard to really know until the cascades and feedback loops start to accelerate beyond the point of no return. Overreacting before that happens looks a lot like “losing your nerve.”

The longer the drift goes on, the more rational it is for individual participants to assume that everything is fine, even if it is not. It’s hard to even know what “fine” and “not fine” mean in systems that can drift for years. As the old saying goes, “The market can stay irrational longer than you can stay solvent.” The extremely long time lags between models becoming inaccurate and reality catching up make the process of updating these models politically fraught within institutions. In our current era, governments are increasingly unwilling to let reality catch up with markets where the largest firms are concerned. By intervening to prevent mispriced risk from being suddenly repriced to more sustainable levels, they intensify moral hazard.

This does not apply only to finance and money; it applies to all kinds of risk and all kinds of major institutions: businesses, armies, government bureaucracies, and so on.

In 2006–2008, Michael Burry shorted the MBS (mortgage-backed securities) underlying the U.S. housing market using credit default swaps. He managed something many short sellers never do: he survived long enough for reality to assert itself. During long stretches of interim losses, many of his investors were furious, demanded withdrawals, and accused him of recklessness. When the trade finally paid off and made them extraordinarily wealthy amid a financial firestorm, many of his investor relationships were already broken. Burry closed his fund to outside capital soon after.
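The timing problem can be reduced to simple arithmetic. The sketch below assumes a fund that pays a fixed monthly carrying cost on its position and faces fixed monthly investor withdrawals; it counts how many months the fund lasts before it can no longer cover both. All figures and names are hypothetical and are not Burry's actual numbers.

```python
def months_survived(capital: float,
                    monthly_carry: float,
                    monthly_redemptions: float = 0.0,
                    horizon: int = 120) -> int:
    """Count the months a fund can cover carry plus redemptions,
    stopping at `horizon` months at the latest."""
    outflow = monthly_carry + monthly_redemptions
    months = 0
    while months < horizon and capital >= outflow:
        capital -= outflow
        months += 1
    return months


def trade_pays_off(capital: float,
                   monthly_carry: float,
                   monthly_redemptions: float,
                   months_until_repricing: int) -> bool:
    """A correct thesis only pays if the fund outlasts the drift."""
    lasted = months_survived(capital, monthly_carry, monthly_redemptions)
    return lasted >= months_until_repricing


if __name__ == "__main__":
    # Hypothetical fund: 100 units of capital, 2 units of carry per month.
    print(trade_pays_off(100.0, 2.0, 0.0, months_until_repricing=36))
    # Same thesis, same carry, but investors pull 3 units per month.
    print(trade_pays_off(100.0, 2.0, 3.0, months_until_repricing=36))
```

In this toy setup the thesis is identical in both runs; only investor patience differs. That is the sense in which being right is not sufficient: the position has to survive the gap between the model breaking and reality catching up.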

What Causes the Eventual Rupture?

The rupture only comes when the system is forced into a full update, as was the case in New York in 2008, and in France in the summer of 1940. One was a financial rupture, one was military, but the process was structurally similar. A forcing function arrives to puncture the fictions, not just for one or two Cassandras, but for everyone. When a bubble bursts it’s because it is no longer possible to ignore the accumulation of contradictions; a rapid update is imposed on a system that was unwilling to update itself voluntarily.

France’s nemesis in 1940 was the Wehrmacht; in 2008 the whole world had to face the Great Recession. I discussed the Great Recession in more detail in a recent essay:

Democracy’s Edge: Contested Reality

I dissect the 2008 crisis as a failure of institutional orientation: how warnings were visible, models froze, and only collapse forced revision.

In some cases, the anticipated rupture never comes, but preventing a rupture does require a gradual updating of models so that they regain a grounded ability to predict and manage reality. Systemic rupture is not inevitable, but it is rarely avoided. Ruptures are prevented only when systems update their internal models before contradictions synchronize into a forcing event. The clearest historical near-misses include the 19th-century United Kingdom, where incremental political and labor reforms diffused pressures that produced revolution elsewhere; the early-20th-century United States, where Progressive-era and New Deal reforms absorbed Gilded Age contradictions before they triggered systemic collapse; and postwar Scandinavia, where high-trust institutions enabled continuous model updating rather than episodic breakdown. In other cases, such as the early Roman Republic, rupture was delayed by exporting contradictions outward through wars of expansion, only to return later with greater force. The common thread is that rupture is definitively avoided only when elites and institutions accept loss of status, revise narratives early, and reward truth over face-saving; when they do not, the update eventually arrives disruptively and all at once.

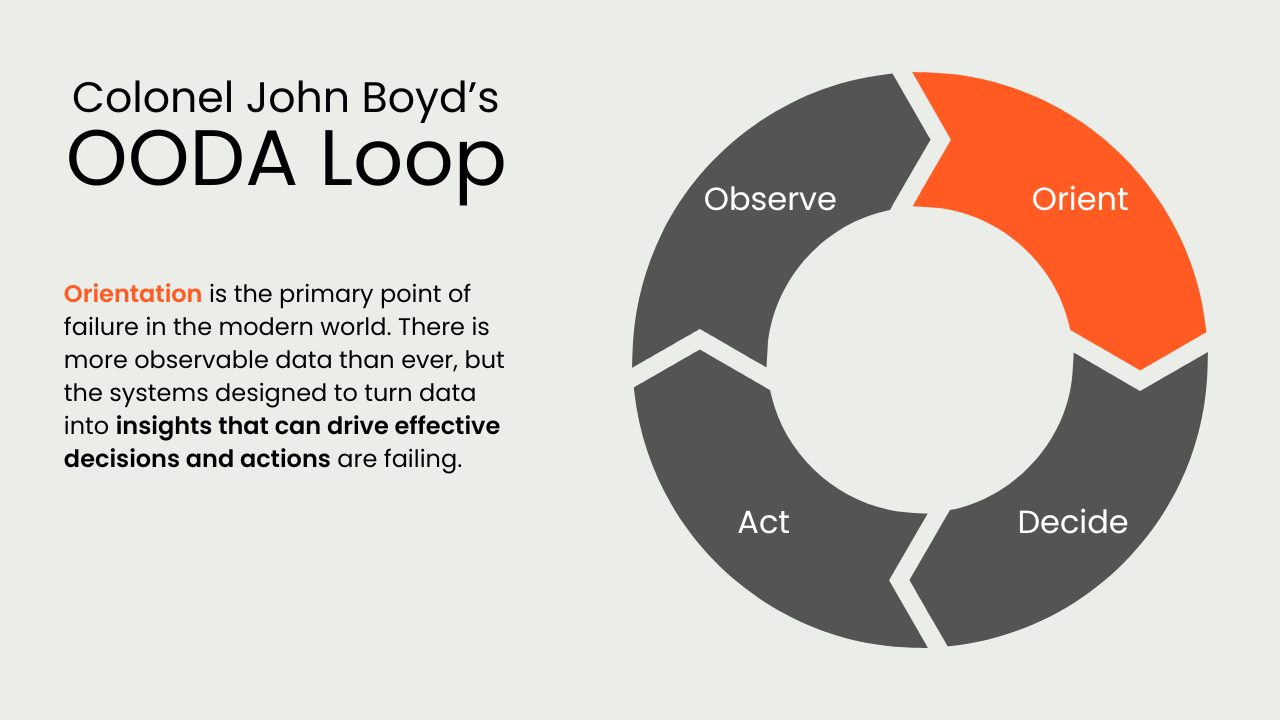

Mark Carney recently declared that the world is entering a period of global political rupture. He may eventually be proven right, but declarations alone do not force systemic updates. Ruptures are not recognized because influential figures announce them; they arrive when underlying contradictions become impossible to ignore systemwide. Despite Carney’s “pivot to China,” global financial markets continue to place far greater trust in the U.S. dollar than in China’s opaque, state-controlled financial system. Talk of rupture remains speculative rather than operative for the time being.

When Models Become Identity Tests

Why do models that are designed to track reality stop doing their jobs? As factionalism intensifies among elites (something that becomes more common as elite overproduction intensifies), the models once used to interpret reality and guide collective action become litmus tests for membership in rival factions. Worldviews harden and become impervious to updating because adherence to a specific worldview is how loyalty to one’s faction is defined and enforced.

“I support the current thing” is a Bayesian joke about people who use worldviews and claims about reality to signal group membership rather than to look honestly at reality and solve problems. The joke is funny/not funny because repurposing models and worldviews as group membership signals makes it harder to update them while preserving group coherence, and therefore leads to decisions that are less and less reality-based over time. I sometimes refer to this phenomenon as “epistemic drift.” I have written about this “simulation process,” using a framework I borrowed from Baudrillard, in the essay below.

From Systems to Simulacra

How institutions drift over time from generativity (active problem solving) to ritualized performance.

Worldviews that harden into ingroup identity tests are dangerous precisely because they erode decision-making capacity so slowly that many people fail to notice it happening. When they do notice, they often dismiss it as “the way things are.” Over long time horizons, this allows unpriced risks to accumulate within institutions and societies.

Even if individuals want to update the models and worldviews, it becomes increasingly socially expensive to do so. Attempting updates carries an individual risk of ostracism from the social groups and organizations that have organized their hierarchies around those worldviews. Updating the models voluntarily can cause major power shifts both within and between institutions.

History is full of high-cost defections—figures like Brooksley Born, Michael Burry, or John Boyd—who were punished not for being wrong, but for attempting to force model updates before institutions were prepared to absorb the consequences.

For example: if the Republicans were to come out and say they have changed their minds about immigration enforcement and are going to leave illegal immigrants alone inside the US, they would immediately lose the MAGA base they depend on for power. However, if they were to say that the current mode of ICE enforcement is too dangerous and that they are shifting to using the E-Verify system to penalize businesses that knowingly hire illegal immigrants, rather than roaming around picking people up off the street, they would lose many “business Republicans” who want to maintain a pool of cheap, off-the-books labor.

They are stuck in a structural, coalition-generated incoherence: they want their business supporters to have the economic benefits of cheap labor and they want their working class supporters to feel relief from the same cheap labor and a creeping sense of cultural erosion. As a result, they are pursuing a rather confusing and confrontational approach to enforcement as they try to walk the line between these contradictions.

The Democrats are just as incoherent on this topic; as with the Republicans, internal contradictions within their base of support constrain their ability to articulate a solution that does not further escalate conflict.

In practice, this incoherence is managed by both parties through moral signaling rather than policy clarity.

Rupture is not caused by ignorance; it is caused by institutions that make learning too expensive.

Conclusion

Modern institutional failures rarely stem from a lack of information. They arise when the models used to interpret reality become socially and politically too expensive to update. As incentives harden and factions consolidate around particular worldviews, updating those models carries unacceptable personal and institutional costs. Over time, institutions stop learning not because reality has become unknowable, but because acknowledging it has become too disruptive.

The difference between reform and rupture lies here. Systems that avoid rupture do so not because they are wiser or more virtuous, but because they preserve the capacity to revise their internal models before contradictions synchronize. They reward early correction, tolerate losses of status among elites, and treat contradiction as informational input rather than as a threat to legitimacy. Systems that fail to do this postpone updating until it can no longer be managed incrementally.

The deeper danger, then, is not data scarcity but institutional rigidity. Societies increasingly possess vast informational resources, yet lack institutions capable of admitting error while there is still time to adapt. When updating becomes socially impossible, rupture becomes inevitable; by the time it arrives, it no longer matters who understood the problem first.

We encourage your thoughts, comments, and questions.

This is a brilliant summary: Rupture is not caused by ignorance; it is caused by institutions that make learning too expensive.

Another pathway is the institution no longer has the mechanisms to learn or self-correct, as these have atrophied into "going through the motions" simulations rather than actual learning. This is the pathway of Model Collapse: simulations become hallucinations.

I have not left a comment before, however I am struck by your insight. The leaders have too much to lose by acknowledging the problems so just kick it forward